
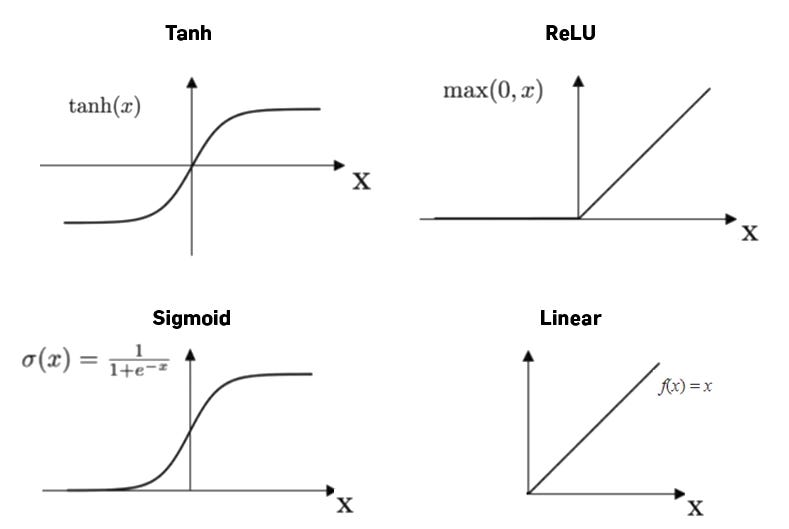

Sigmoid accepts a real number as input and returns a number between 0 and 1. It is simple to use and has the qualities usually wanted in an activation function: nonlinearity, continuous differentiability, monotonicity, and a fixed output range. It is mainly used in binary classification problems, where its output can be read as the probability that a particular class is present. Unlike the binary step activation function, it gives an analogue (graded) activation, and combinations of sigmoids are also non-linear. It has a smooth gradient too, which suits classifier-type problems. Its output always lies in the range (0, 1), compared with (-∞, ∞) for a linear activation function, so the activations are bounded.

Sigmoid also gives rise to the problem of “vanishing gradients”: for inputs of large magnitude the function saturates and its gradient approaches zero, which kills the gradient signal, so the network either stops learning or learns extremely slowly. Because the output always lies between zero and one, it is not zero-centred. This can push the gradient updates too far in different directions and makes optimization harder.

TanH compresses a real-valued number to the range (-1, 1).

The remainder of this article outlines the major non-linear activation functions used in neural networks.
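The ranges and the saturation behaviour described above can be checked numerically. The following is a minimal sketch using only Python's standard library; the helper names `sigmoid`, `sigmoid_grad`, and `tanh_grad` are illustrative and do not come from any particular framework.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written so that large negative x does not overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid: s * (1 - s), which peaks at 0.25 when x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)


def tanh_grad(x: float) -> float:
    """Derivative of tanh: 1 - tanh(x)^2, which peaks at 1 when x = 0."""
    t = math.tanh(x)
    return 1.0 - t * t


# Print outputs and gradients at a few sample inputs (values chosen arbitrarily).
for x in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(
        f"x={x:6.1f}  sigmoid={sigmoid(x):.5f}  d_sigmoid={sigmoid_grad(x):.5f}  "
        f"tanh={math.tanh(x):+.5f}  d_tanh={tanh_grad(x):.5f}"
    )
```

At the extreme inputs both gradients are close to zero, which is the saturation that leads to vanishing gradients. The sigmoid outputs stay strictly positive, while the TanH outputs are symmetric around zero.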

Non-linear activation functions are the most widely used activation functions. They make it easier for an artificial neural network to adapt to a variety of data and to differentiate between outputs. They also make stacking multiple layers of neurons worthwhile: the output becomes a non-linear combination of the input passed through several layers, whereas a stack of purely linear layers is equivalent to a single linear layer. In this way, a network's output can be represented as a composition of functions. These activation functions are mainly categorised by their range and the shape of their curves.
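To illustrate why stacking layers depends on non-linearity, the sketch below compares two stacked one-dimensional layers with and without an activation in between. The weights `W1`, `B1`, `W2`, and `B2` are hypothetical values chosen only for the demonstration.

```python
import math

# Hypothetical weights and biases for two one-dimensional layers.
W1, B1 = 2.0, -1.0
W2, B2 = 0.5, 3.0


def linear_stack(x: float) -> float:
    """Two layers with no activation in between."""
    return W2 * (W1 * x + B1) + B2


def collapsed(x: float) -> float:
    """The single linear layer that the stack above reduces to."""
    return (W2 * W1) * x + (W2 * B1 + B2)


def tanh_stack(x: float) -> float:
    """The same two layers with tanh applied after the first one."""
    return W2 * math.tanh(W1 * x + B1) + B2


def second_difference(f, x: float, h: float = 0.5) -> float:
    """f(x+h) - 2 f(x) + f(x-h): zero (up to rounding) for any straight line."""
    return f(x + h) - 2.0 * f(x) + f(x - h)


print("linear stack equals one layer:", abs(linear_stack(0.3) - collapsed(0.3)) < 1e-12)
print("curvature of linear stack:", second_difference(linear_stack, 0.3))
print("curvature of tanh stack:  ", second_difference(tanh_stack, 0.3))
```

The linear stack shows no curvature beyond rounding error, so the second layer adds no expressive power. With tanh in between, the composite function is no longer a straight line.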
